It would be easy, even comforting, to imagine that using AI tools involves interacting with a purely objective, stoic, independent machine that knows nothing about you. But between cookies, device identifiers, login and account requirements, and even the occasional human reviewer, the voracious appetite online services have for your data seems insatiable.

Privacy is a major concern that both consumers and governments have about the pervasive spread of AI. Across the board, platforms highlight their privacy features, even if they’re hard to find. (Paid and business plans often exclude training on submitted data entirely.) But any instance of a chatbot “remembering” something can still feel intrusive.

In this article, we will explain how to tighten your AI privacy settings by deleting your previous chats and conversations and by turning off settings in ChatGPT, Gemini (formerly Bard), Claude, Copilot, and Meta AI that allow developers to train their systems on your data. These instructions are for the desktop, browser-based interface for each.

ChatGPT

Still the flagship of the generative AI movement, OpenAI’s ChatGPT has several features to improve privacy and alleviate concerns about user prompts being used to train the chatbot.

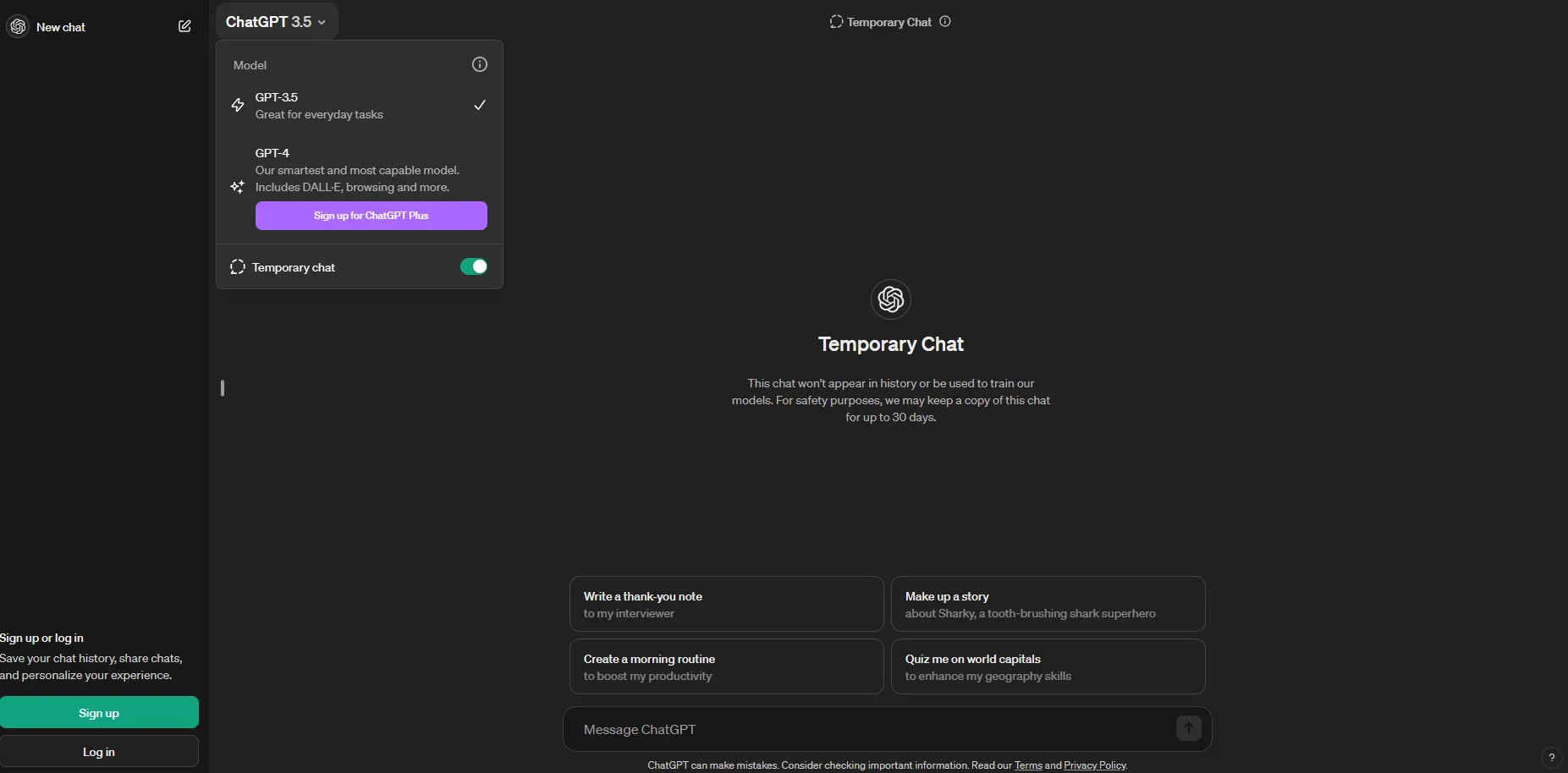

In April, OpenAI announced that ChatGPT could be used without an account. By default, prompts shared through the free, account-less version aren’t saved. However, if a user doesn’t want their chats used to train ChatGPT, they still need to toggle the “Temporary chat” setting in the ChatGPT dropdown menu at the top left of the screen.

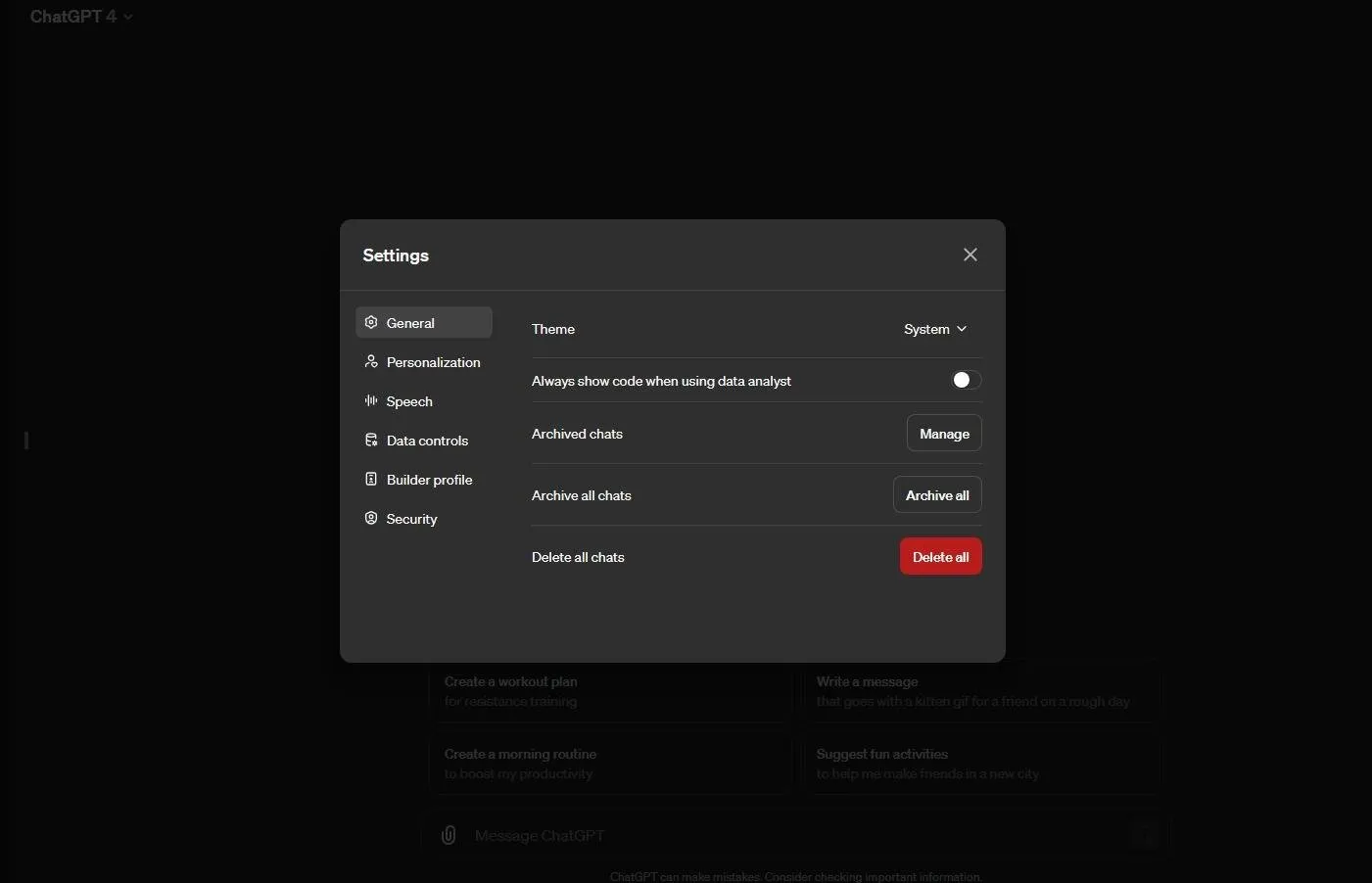

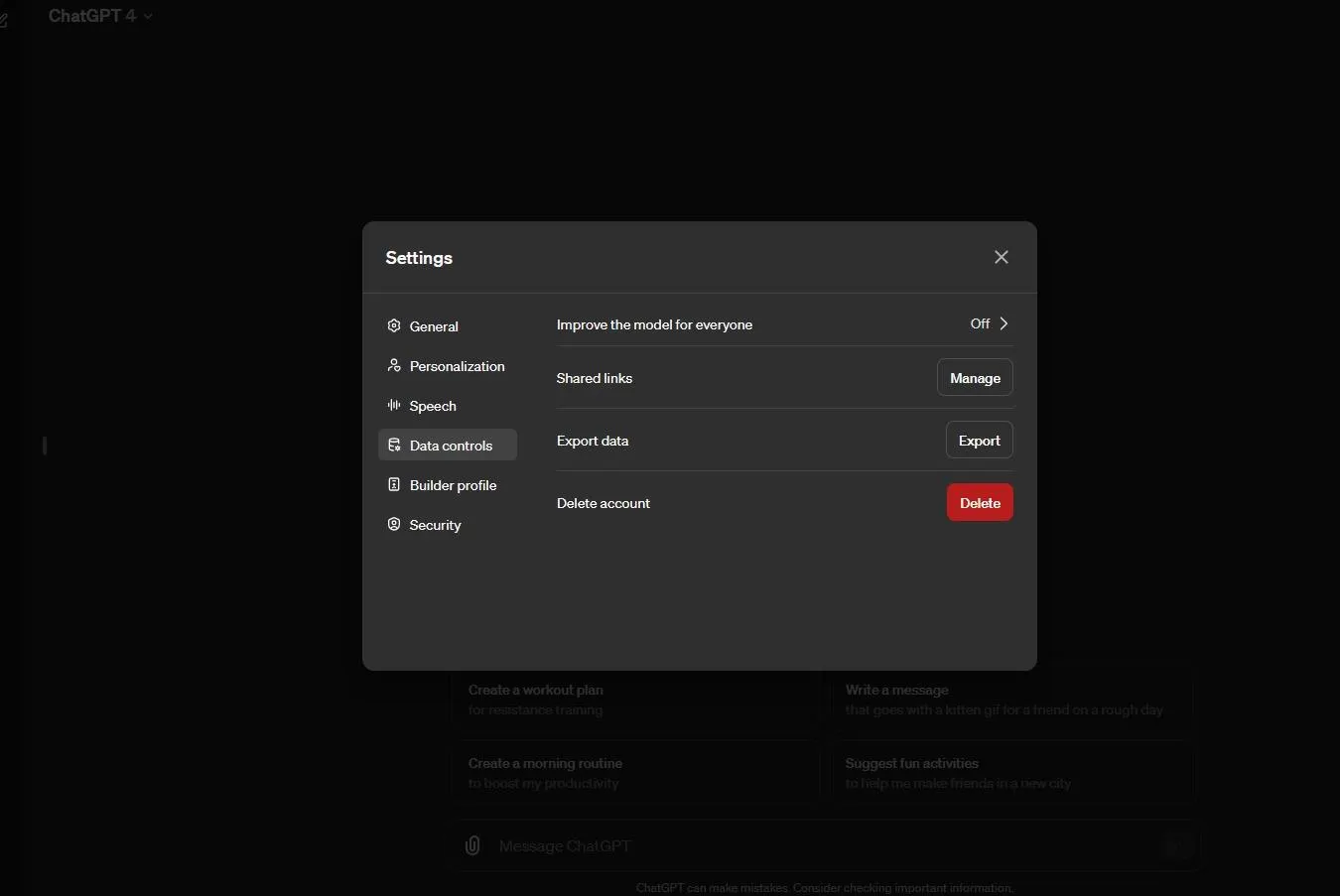

If you have an account and subscription to ChatGPT Plus, however, how do you keep your prompts from being used? GPT-4 gives users the ability to delete all chats under its general settings. Again, to make sure chats are also not used to train the AI model, look lower to “Data controls” and click the arrow to the right of “Improve the model for everyone.”

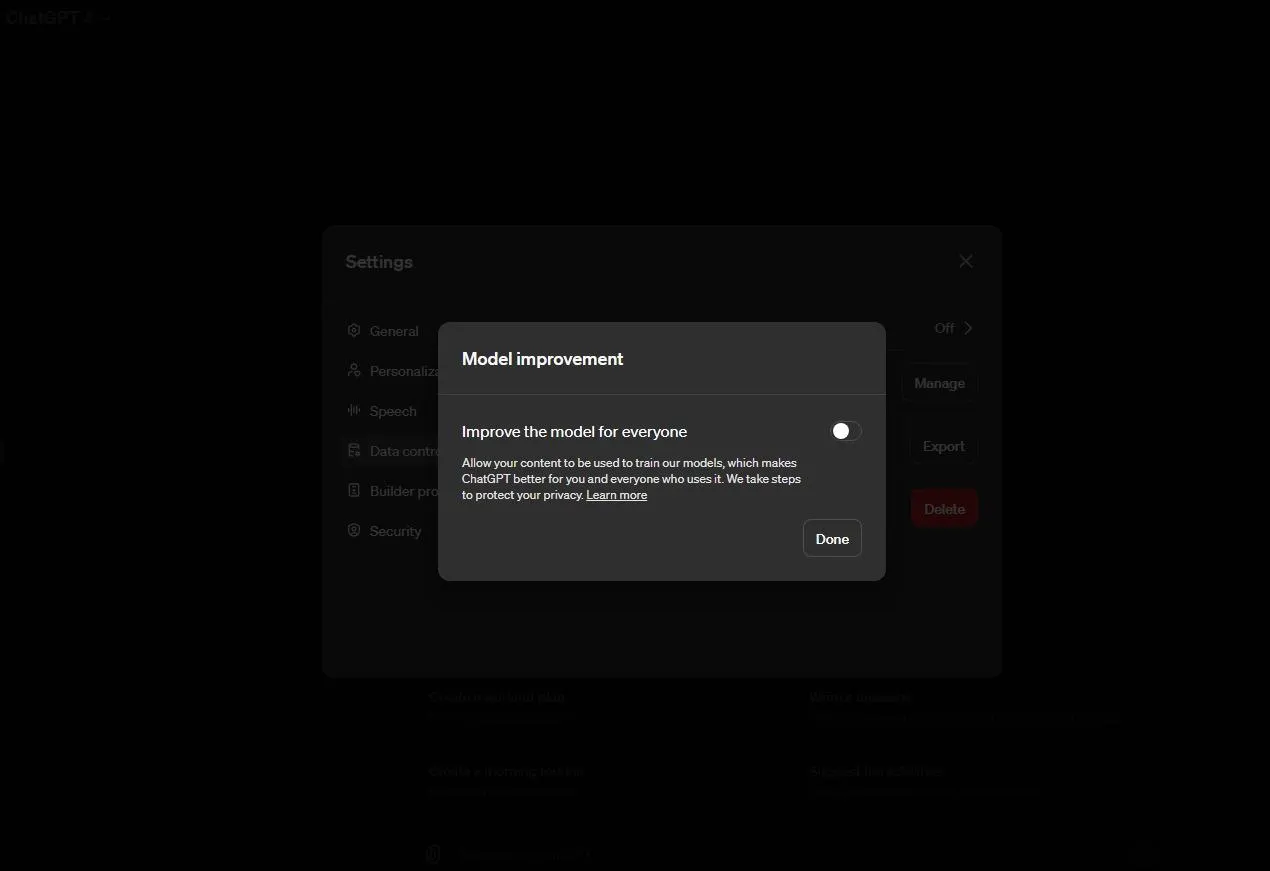

A separate “Model improvement” section will appear, allowing you to toggle it off and select “Done.” This will remove the ability of OpenAI to use your chats to train ChatGPT.

There are still caveats, however.

“While history is disabled, new conversations won’t be used to train and improve our models, and won’t appear in the history sidebar,” an OpenAI spokesperson told Decrypt. “To monitor for abuse, and reviewed only when we need to, we will retain all conversations for 30 days before permanently deleting.”

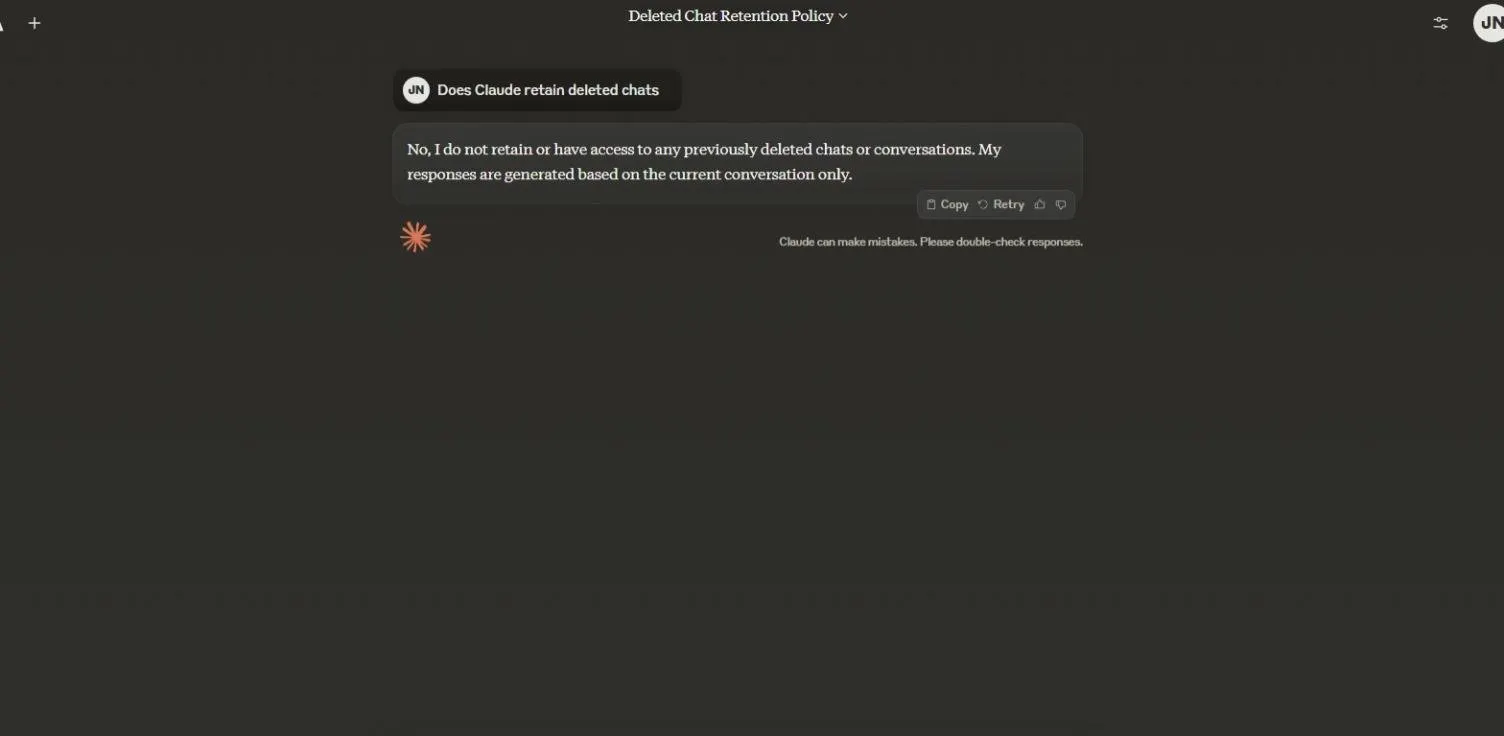

Claude

“We do not train our models on user-submitted data by default,” an Anthropic spokesperson told Decrypt. “To date, we have not used any customer or user-submitted data to train our generative models, and we’ve expressly stated so in the model card for our Claude 3 model family.”

“We may use user prompts and outputs to train Claude where the user gives us express permission to do so, such as clicking a thumbs up or down signal on a specific Claude output to provide us feedback,” the company added, noting that it helps the AI model “learn the patterns and connections between words.”

Deleting archived chats in Claude will also keep them out of reach. “I don’t retain or have access to any previously deleted chats or conversations,” the Claude AI agent helpfully answers in the first person. “My responses are generated based on the current conversation only.”

Like ChatGPT, Claude does hold on to some information as required by law.

“We also retain data in our backend systems for the amount of time specified in our Privacy Policy unless required to enforce our Acceptable Use Policy, address Terms of Service or policy violations, or as required by law,” Anthropic explains.

As for Claude’s collection of data across the web, an Anthropic spokesperson told Decrypt that the AI developer’s web crawler respects industry-standard technical signals like robots.txt that site owners can use to opt out of data collection, and that Anthropic does not access password-protected pages or bypass CAPTCHA controls.
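For site owners curious how a robots.txt opt-out is evaluated, here is a minimal sketch using Python's standard `urllib.robotparser` module. The robots.txt content, the `is_allowed` helper, and the example URL are hypothetical, and the `ClaudeBot` user-agent token is an assumption that should be checked against Anthropic's own crawler documentation. It illustrates how a well-behaved crawler could interpret the file, not how any particular company's crawler is implemented.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt a site owner might publish.
# "ClaudeBot" is assumed to be the crawler's user-agent token; verify it
# against the crawler operator's documentation before relying on it.
ROBOTS_TXT = """\
User-agent: ClaudeBot
Disallow: /

User-agent: *
Allow: /
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules permit user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# The named crawler is disallowed everywhere on the site.
blocked = is_allowed(ROBOTS_TXT, "ClaudeBot", "https://example.com/articles/1")
# Any other crawler falls through to the wildcard rule and is allowed.
open_to_others = is_allowed(ROBOTS_TXT, "SomeOtherBot", "https://example.com/articles/1")

print(blocked, open_to_others)
```

Keep in mind that robots.txt is a voluntary signal: it only works when the crawler chooses to honor it, which is why the spokesperson's statement that the crawler respects it matters.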

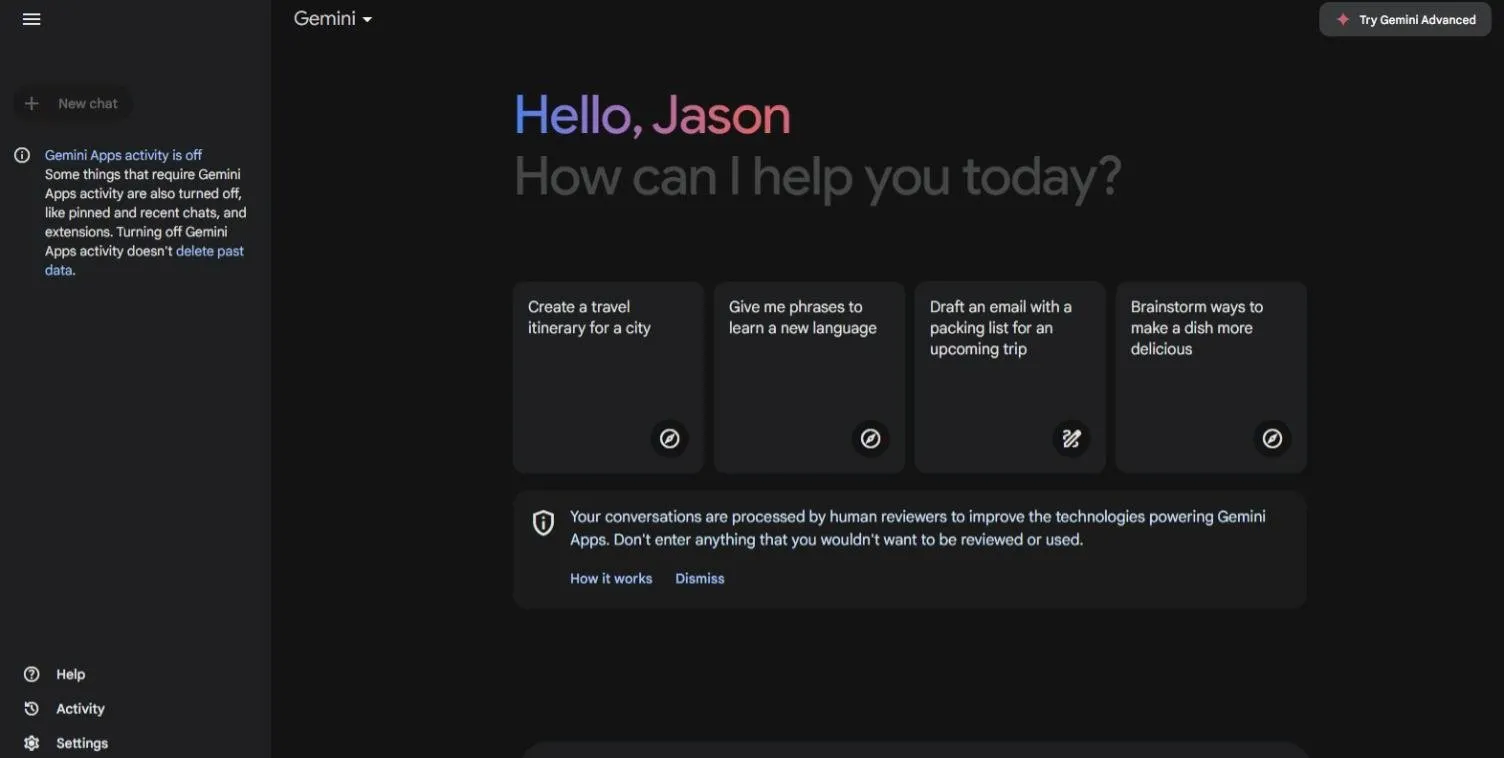

Gemini

By default, Google tells Gemini users that “your conversations are processed by human reviewers to improve the technologies powering Gemini Apps. Don’t enter anything that you wouldn’t want to be reviewed or used.”

But Gemini AI users can delete their chatbot history and opt out of having their data used to train its model going forward.

To accomplish both, navigate to the bottom left of the Gemini homepage and locate “Activity.”

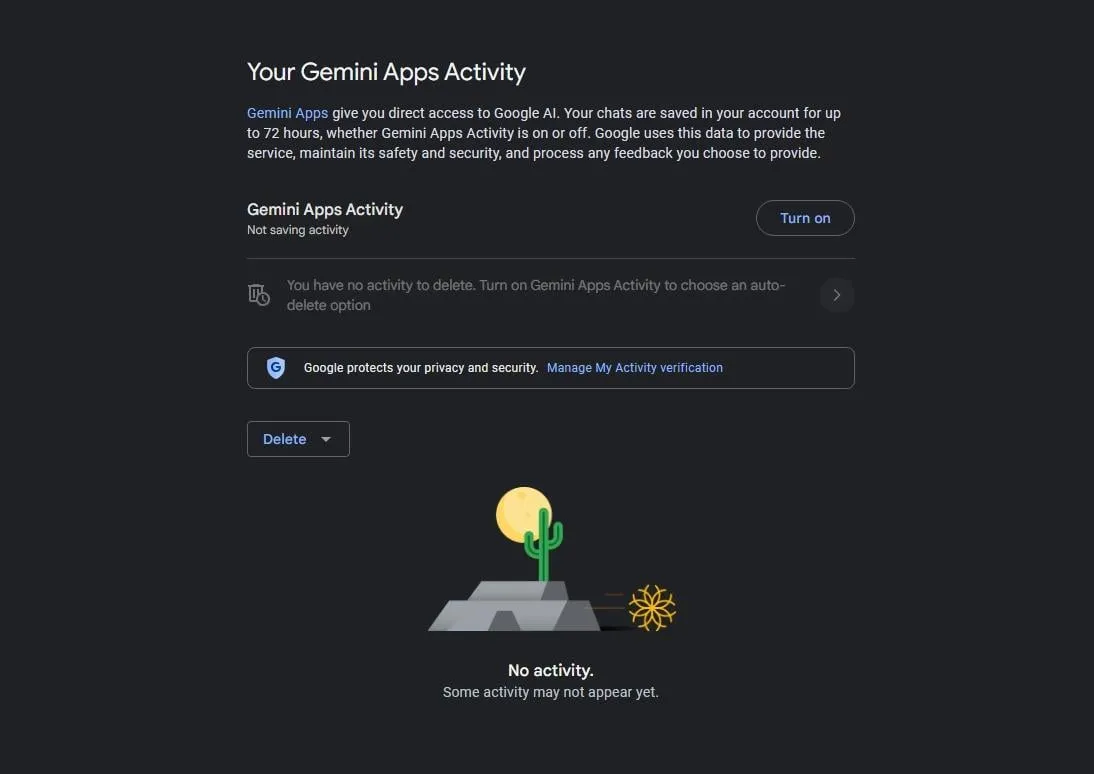

Once on the activity screen, users can then turn off “Gemini Apps Activity.”

A Google representative explained to Decrypt what the “Gemini Apps Activity” setting does.

“If you turn it off, your future conversations won’t be used to improve our generative machine-learning models by default,” the company representative said. “In this instance, your conversations will be saved for up to 72 hours to allow us to provide the service and process any feedback you may choose to provide. In those 72 hours, unless a user chooses to give feedback in Gemini Apps, it won’t be used to improve Google’s products, including our machine learning technology.”

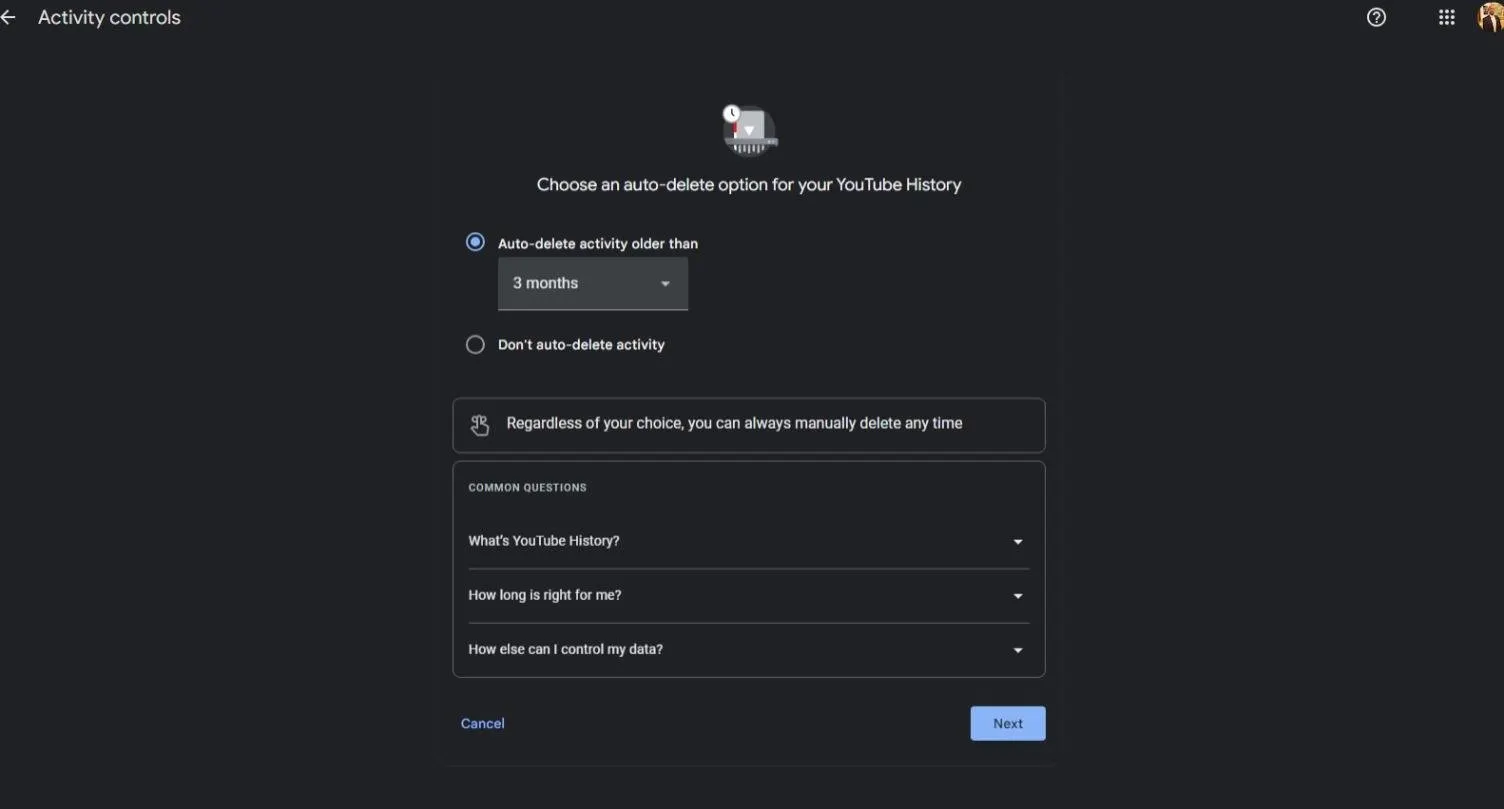

There’s also a separate setting to filter out your Google-connected YouTube history.

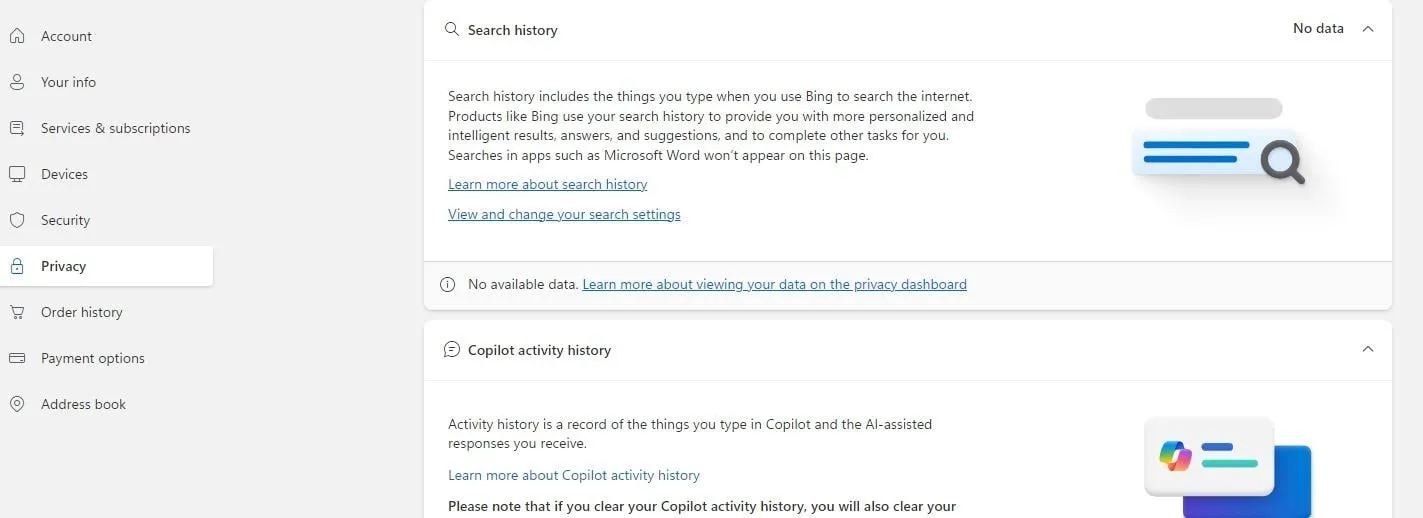

Copilot

In September, Microsoft added its Copilot generative AI model to its Microsoft 365 suite of business tools, its Microsoft Edge browser, and Bing search engine. Microsoft also included a preview version of the chatbot in Windows 11. In December, Copilot was added to the Android and Apple app stores.

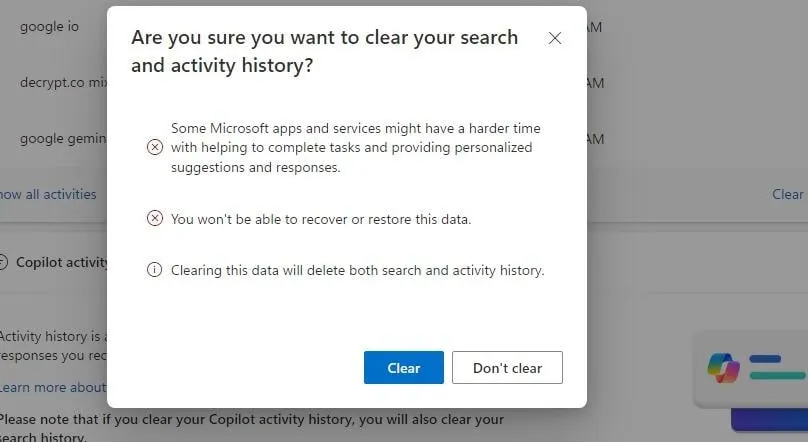

Microsoft does not provide the option to opt out of having user data used to train its AI models, but like Google Gemini, Copilot users can delete their history. The process is not as intuitive on Copilot, however, as previous chats still show on the desktop version’s home screen even after being deleted.

To find the option to delete Copilot history, open your user profile at the top right of your screen (you must be signed in) and select “My Microsoft Account.” On the left, select “Privacy,” and scroll down to the bottom of the screen to find the Copilot section.

Because Copilot is integrated into Bing’s search engine, clearing activity will also clear search history, Microsoft said.

A Microsoft spokesperson told Decrypt that the tech giant protects consumers’ data through various techniques, including encryption, deidentification, and only storing and retaining information associated with the user for as long as is necessary.

“A portion of the total number of user prompts in Copilot and Copilot Pro responses are used to fine-tune the experience,” the spokesperson added. “Microsoft takes steps to de-identify data before it is used, helping to protect consumer identity.” The spokesperson also said that Microsoft does not use any content created in Microsoft 365 (Word, Excel, PowerPoint, Outlook, Teams) to train underlying “foundational models.”

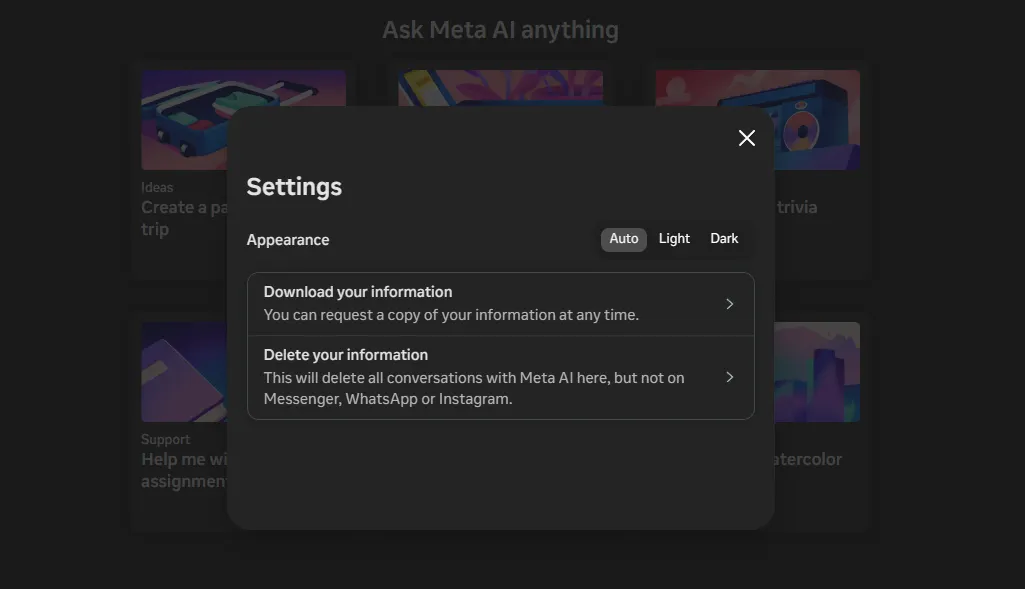

Meta AI

In April, Meta, the parent company of Facebook, Instagram, and WhatsApp, rolled out Meta AI to users.

“We’re releasing the new version of Meta AI, our assistant, that you can ask any question across our apps and glasses,” Meta CEO Mark Zuckerberg said in an Instagram video. “Our goal is to build the world’s leading AI and make it available to everyone.”

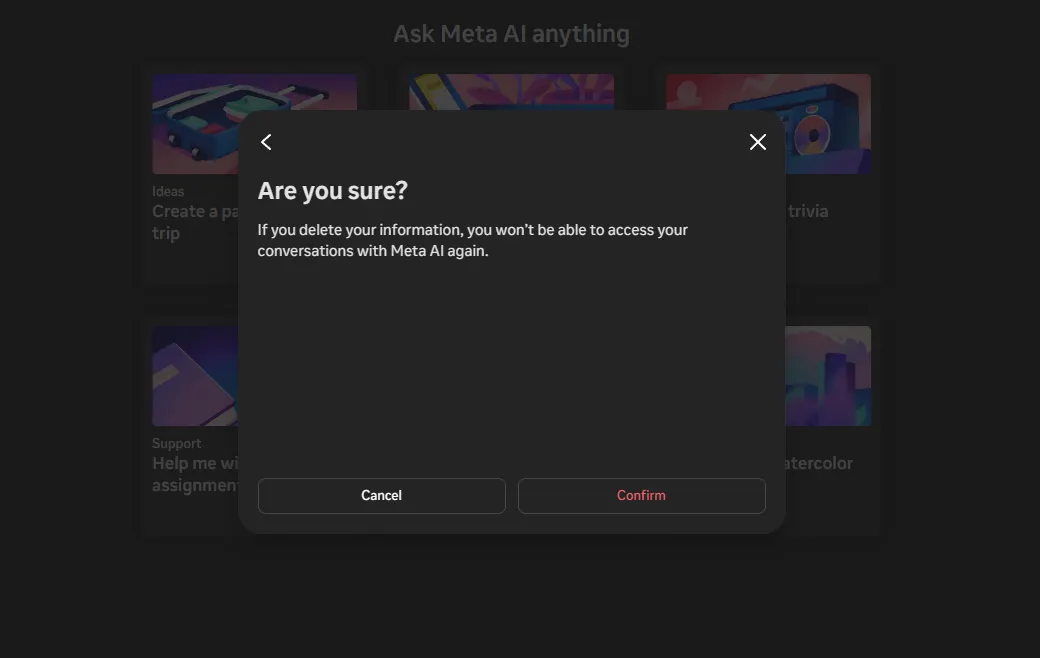

Meta AI does not give users the option to opt out of having their inputs used to train the AI model. Meta does give the option to delete past chats with its AI agent.

To do so from a desktop computer, click the Facebook settings tab at the bottom left of your screen, located above your Facebook profile image. Once in settings, users have the option to delete conversations with Meta AI.

Meta does explain that deleting conversations here will not delete chats with other people in Messenger, Instagram, or WhatsApp.

A Meta spokesperson declined to comment on whether or how users could exclude their information from being used in Meta AI model training, instead pointing Decrypt to a September statement by the company about its privacy safeguards and the Meta settings page on deleting history.

“Publicly shared posts from Instagram and Facebook, including photos and text, were part of the data used to train the generative AI models,” the company explains. “We didn’t train these models using people’s private posts. We also do not use the content of your private messages with friends and family to train our AIs.”

But anything you send to Meta AI will be used for model training, and beyond.

“We use the information people share when interacting with our generative AI features, such as Meta AI or businesses who use generative AI, to improve our products and for other purposes,” Meta adds.

Conclusion

Of the major AI models we included above, OpenAI’s ChatGPT provided the easiest way to delete history and opt out of having chatbot prompts used to train its AI model. Meta’s privacy practices appear to be the most opaque.

Many of these companies also provide mobile versions of their powerful apps, which provide similar controls. The individual steps may be different, and privacy and history settings may function differently across platforms.

Unfortunately, even cranking all privacy settings to their tightest levels may not be enough to safeguard your information, according to Venice AI founder and CEO Erik Voorhees, who told Decrypt that it would be naive to assume your data has been erased.

“Once a company has your information, you can never trust it’s gone, ever,” he said. “People should assume that everything they write to OpenAI is going to them and that they have it forever.”

“The only way to resolve that is by using a service where the information does not go to a central repository at all in the first place,” Voorhees added, pointing to a service like his own.

Edited by Ryan Ozawa.